ASI-Evolve: AI Accelerates AI

Abstract

Can AI accelerate the development of AI itself? While recent agentic systems have shown strong performance on well-scoped tasks with rapid feedback, it remains unclear whether they can tackle the costly, long-horizon, and weakly supervised research loops that drive real AI progress. We present ASI-Evolve, an agentic framework for AI-for-AI research that closes this loop through a learn–design–experiment–analyze cycle. ASI-Evolve augments standard evolutionary agents with two key components: a cognition base that injects accumulated human priors into each round of exploration, and a dedicated analyzer that distills complex experimental outcomes into reusable insights for future iterations.

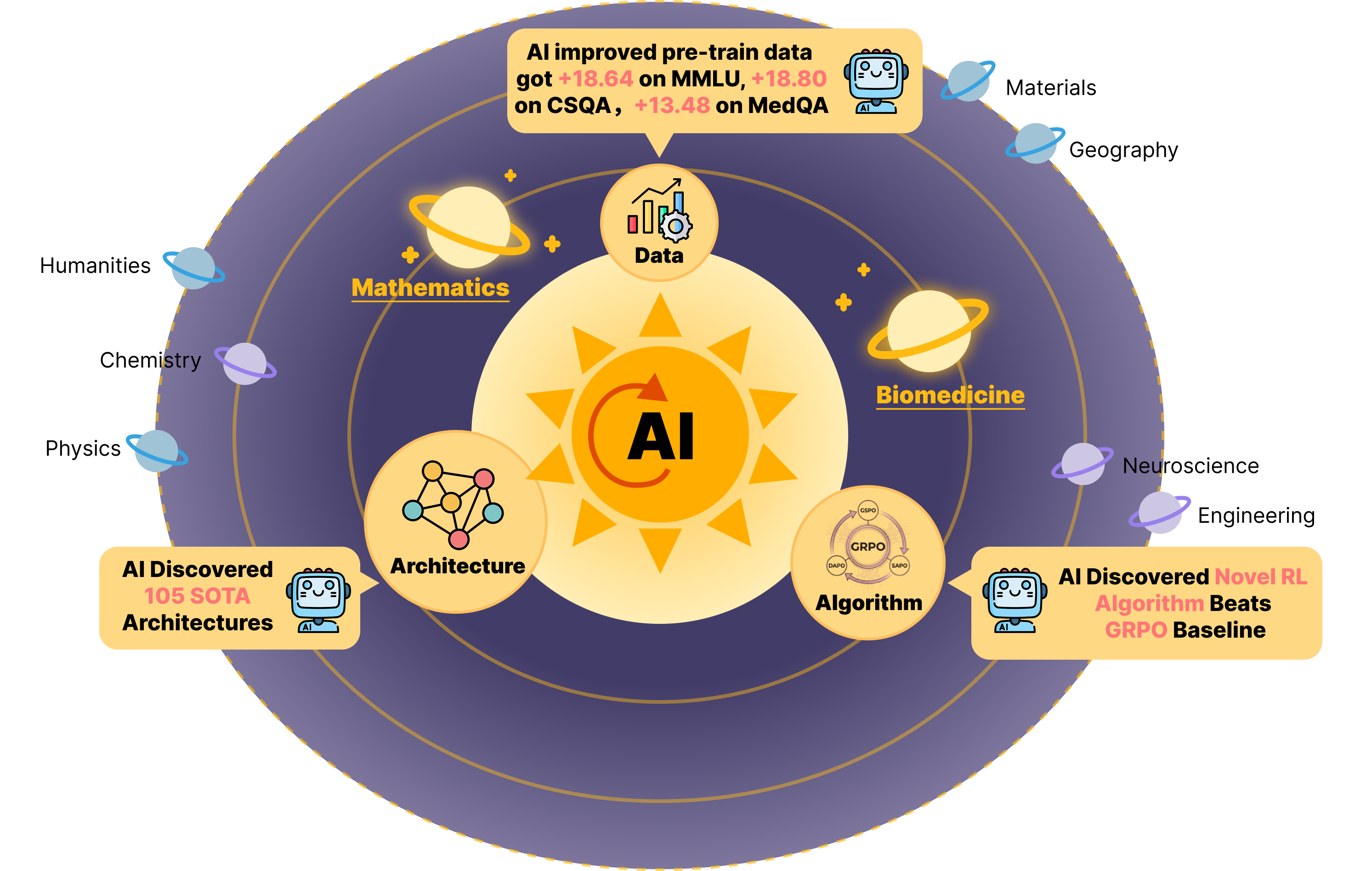

To our knowledge, ASI-Evolve is the first unified framework to demonstrate AI-driven discovery across three central components of AI development: data, architectures, and learning algorithms. In neural architecture design, it discovered 105 SOTA linear attention architectures, with the best discovered model surpassing DeltaNet by +0.97 points, nearly 3× the gain of recent human-designed improvements. In pretraining data curation, the evolved pipeline improves average benchmark performance by +3.96 points, with gains exceeding 18 points on MMLU. In reinforcement learning algorithm design, discovered algorithms outperform GRPO by up to +12.5 points on AMC32, +11.67 points on AIME24, and +5.04 points on OlympiadBench.

We further provide initial evidence that this AI-for-AI paradigm can transfer beyond the AI stack through experiments in mathematics and biomedicine. Together, these results suggest that ASI-Evolve represents a promising step toward enabling AI to accelerate AI across the foundational stages of development, offering early evidence for the feasibility of closed-loop AI research. ASI-Evolve is fully open-sourced at https://github.com/GAIR-NLP/ASI-Evolve.

1. Introduction: The Challenge of Self-Improving AI

Artificial intelligence (AI) advances through many interacting factors; data, model architectures, and learning algorithms are three central research components. Progress in each of these directions depends on repeated cycles of hypothesis generation, implementation, experimentation, and analysis. In practice, however, these cycles are constrained by multidimensional human bottlenecks: the hypothesis space humans can explore in parallel is severely limited, experimental workflows demand substantial manual effort and frequent intervention, and the accumulation of insights across iterations often depends on individual experience and intuition, making knowledge difficult to systematically preserve and transfer. Together, these constraints fundamentally limit the pace and scale of progress in AI development, raising a central question: can AI accelerate the development of AI itself?

The Evolution of AI in Scientific Discovery

Recent advances in AI capabilities have made this possibility increasingly plausible. The role of AI in scientific discovery has evolved rapidly: from specialized systems that solve discrete, well-defined problems such as AlphaFold (protein structure prediction), GraphCast (weather forecasting), and GNoME (materials discovery), to LLM-based and agentic systems that support broader scientific workflows. Systems such as SciMaster focus on scientific question answering with known answers; ML-Master and MLEvolve address bounded optimization problems under fixed evaluation criteria; and AI Scientist automates the research publication pipeline rather than tackling open-ended frontier research.

AlphaEvolve takes an important step toward autonomous scientific optimization by iteratively improving candidate solutions through coding agents. Yet the research loops that drive real AI progress remain substantially harder to automate: improving architectures, data pipelines, or training algorithms typically requires modifying large codebases, running costly experiments, interpreting multidimensional outcomes, and sustaining coherent exploration across many rounds. Existing frameworks have not yet demonstrated that AI can operate effectively in this regime in a unified way, nor that it can generate meaningful advances across the three foundational pillars of AI development rather than within a single narrowly scoped setting.

The ASI-Evolve Framework

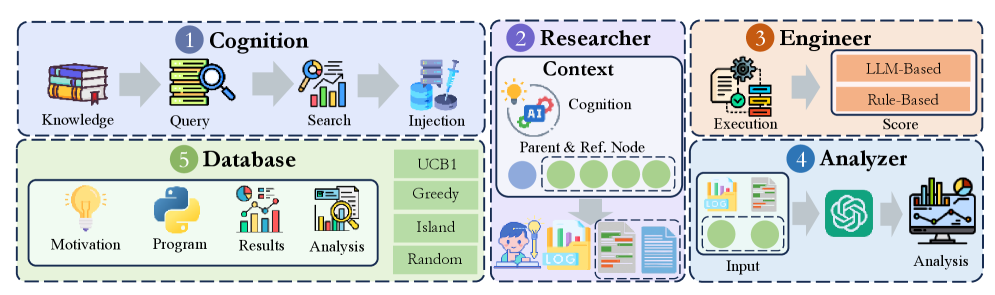

To address this gap, we present ASI-Evolve, an agentic framework for AI-for-AI research. The general scientific process follows a principled cycle: researchers collect extensive background literature, formulate informed hypotheses, execute experiments, and distill insights through systematic analysis. Inspired by this workflow, ASI-Evolve closes the loop between prior knowledge, hypothesis generation, experimental execution, and iterative refinement through a learn–design–experiment–analyze cycle.

Two components are central to this design:

- Cognition Base: A structured knowledge repository that grounds each round of exploration in accumulated human research literature from the outset, allowing the system to build on domain knowledge rather than search from scratch.

- Analyzer: A dedicated module that translates complex multi-dimensional experimental outcomes into structured, actionable insights that are written back into the experience database for future iterations.

Together, these components enable sustained improvement on long-horizon AI research tasks where feedback is expensive, indirect, noisy, and difficult to interpret, substantially improving both the speed and quality of the evolution process.

Key Results Across Three Pillars

Using ASI-Evolve, we demonstrate that AI can accelerate multiple parts of its own development stack. To our knowledge, this is the first unified demonstration of AI-driven discovery across three central components of AI development: data, architectures, and learning algorithms.

(1) Model Architecture: In neural architecture design, ASI-Evolve autonomously generated 1,350 candidates across 1,773 exploration rounds, discovering 105 architectures that surpass the human-designed DeltaNet; its top-performing model achieved a +0.97 point gain, nearly triple the improvement of recent manual SOTA advancements.

(2) Data Curation: In pretraining data curation, evolved strategies produced cleaner training datasets, improving over the original data by 3.96 points on average benchmarks, with particularly strong gains on knowledge-intensive benchmarks such as MMLU, where improvements exceeded 18 points.

(3) Training Algorithm: In reinforcement learning algorithm design, the framework derived novel optimization mechanisms with principled mathematical innovations that outperform the competitive GRPO baseline by up to +12.5 points on AMC32, +11.67 points on AIME24, and +5.04 points on OlympiadBench.

We further validate the effectiveness of ASI-Evolve through targeted comparisons and ablation studies. On the circle packing task used as a shared benchmark across evolutionary frameworks, ASI-Evolve finds SOTA-level results in as few as 17 rounds, substantially outpacing prior frameworks including OpenEvolve and GEPA. To examine whether AI-designed components provide utility beyond the AI/ML stack, we additionally apply ASI-Evolve to drug-target interaction prediction, a biomedical domain distinct from AI development, where the evolved architecture achieves a 6.94-point AUROC improvement in cold-start generalization scenarios.

2. Understanding Task Complexity: The L_task Framework

To systematically position existing work relative to ASI-Evolve, we introduce Scientific Task Length (L_task) as an analytical framework characterizing the intrinsic challenge of autonomous scientific research tasks along three dimensions:

- Execution Cost (C_exec): Measures the computational resources and engineering complexity required per trial, including the burden of modifying large interdependent codebases and the GPU hours consumed.

- Search Space Complexity (S_space): Captures the complexity of the solution space the system must navigate, including the openness of the task objective, whether candidate solution boundaries are predefined, and the extent to which meaningful exploration directions must be discovered rather than given.

- Feedback Complexity (D_feedback): Measures the difficulty of extracting actionable insights from experimental outcomes, reflecting how much the system must synthesize multi-dimensional signals such as loss dynamics, benchmark distributions, and efficiency traces, rather than simply responding to a scalar score.

We characterize task complexity as L_task = ⟨C_exec, S_space, D_feedback⟩ and use this lens to survey existing work, ranging from simple scientific question answering to large-scale scientific exploration.

The ASI-Evolve Advantage

Neural architecture design, pretraining data curation, and training algorithm design are foundational to AI progress, and represent three central components that ASI-Evolve targets. Validating a single candidate requires complete model training consuming tens to hundreds of GPU hours, often involving deep modifications to large interdependent codebases. The exploration space is broad and open-ended, spanning diverse design choices with no predefined boundaries. Experimental feedback spans multiple benchmarks, loss dynamics, and efficiency metrics, all of which must be jointly interpreted to guide the next iteration.

These properties impose unique demands on any system that attempts to automate such research. Each experimental trial is costly and opportunities for iteration are limited, meaning the system cannot afford to explore blindly. Prior domain knowledge must therefore be incorporated from the outset to steer exploration toward promising directions, motivating the Cognition Base in ASI-Evolve. At the same time, the richness of experimental feedback calls for dedicated interpretation: raw signals across benchmarks and training dynamics must be distilled into actionable insights before the next iteration can proceed, motivating the structured Analyzer.

3. How ASI-Evolve Works

ASI-Evolve is implemented as an end-to-end experimental evolution pipeline. Each iteration proceeds through four stages: (i) learn, retrieving relevant knowledge from the cognition base and historical experience from the database; (ii) design the next candidate program; (iii) experiment, executing the candidate to obtain evaluation signals; and (iv) analyze, distilling outcomes into reusable, human-readable lessons.
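The four stages can be sketched as one loop iteration. This is a minimal illustration under stated assumptions, not the released implementation: `run_round`, the `Node` fields, and the four callables are hypothetical names, and context selection here is simply "top-scoring nodes" as a stand-in for the sampling policies described later.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Node:
    motivation: str
    program: str
    score: float
    analysis: str


def run_round(
    database: List[Node],
    retrieve_cognition: Callable[[List[Node]], List[str]],
    design: Callable[[List[Node], List[str]], Tuple[str, str]],
    experiment: Callable[[str], Dict[str, float]],
    analyze: Callable[[str, Dict[str, float]], str],
    n_context: int = 3,
) -> Node:
    # (i) learn: pick context nodes (assumption: greedy top-n) and fetch priors
    context = sorted(database, key=lambda n: n.score, reverse=True)[:n_context]
    priors = retrieve_cognition(context)
    # (ii) design: produce a motivation and a complete candidate program
    motivation, program = design(context, priors)
    # (iii) experiment: run the candidate and collect structured metrics
    results = experiment(program)
    # (iv) analyze: distill the run into a compact report, then persist the node
    report = analyze(program, results)
    node = Node(motivation, program, results["score"], report)
    database.append(node)
    return node
```

In a real run, `design` and `analyze` would wrap LLM calls and `experiment` would wrap the user-specified evaluation procedure; here they are left as injected callables so the control flow stays visible.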

3.1 Researcher Module

The Researcher generates the next candidate program given the task description, sampled context nodes, and retrieved cognition items. In each round, it first samples n nodes from the database and then retrieves a small set of cognition items by semantic search over the sampled nodes' analyses or motivations, which provide additional priors.

Conditioned on this context, the Researcher uses an LLM to produce a complete program together with a natural-language motivation, which are stored together as a new node for subsequent rounds. In addition to full-code generation, the system also supports an optional diff-based editing mode, where the model proposes localized modifications over a parent program; this incremental style is particularly helpful when evolving larger codebases over many rounds.

3.2 Engineer Module

The Engineer executes the candidate program in the actual experiment environment and produces the quantitative evaluation signal used for evolution. Given a generated program, it invokes a user-specified evaluation procedure that runs the experiment end-to-end and returns structured metrics, including a primary scalar score that serves as the fitness signal.

To better handle long-horizon tasks, the Engineer supports early rejection via configurable wall-clock limits and lightweight quick tests, improving efficiency by filtering flawed candidates before expensive runs. It can also optionally invoke an LLM-based judge to cover aspects of candidate quality that are difficult to capture with rule-based metrics alone, combining its score with the primary metric.
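Early rejection can be illustrated with a cheap check followed by a time-limited run. The sketch below is an assumption-laden example, not the framework's Engineer: `evaluate_with_early_rejection` and `compiles` are hypothetical helpers, and it assumes the candidate prints its primary scalar score on its last output line.

```python
import subprocess
import sys
from typing import Callable


def compiles(program: str) -> bool:
    # Lightweight quick test: does the candidate even parse?
    try:
        compile(program, "<candidate>", "exec")
        return True
    except SyntaxError:
        return False


def evaluate_with_early_rejection(
    program: str,
    quick_test: Callable[[str], bool],
    wall_clock_limit_s: float,
) -> dict:
    # Reject flawed candidates before paying for the expensive run.
    if not quick_test(program):
        return {"success": False, "reason": "quick_test_failed", "score": None}
    try:
        proc = subprocess.run(
            [sys.executable, "-c", program],
            capture_output=True,
            text=True,
            timeout=wall_clock_limit_s,  # configurable wall-clock limit
        )
    except subprocess.TimeoutExpired:
        return {"success": False, "reason": "timeout", "score": None}
    if proc.returncode != 0:
        return {"success": False, "reason": "runtime_error", "score": None}
    try:
        # Assumption: the last stdout line is the primary scalar score.
        score = float(proc.stdout.strip().splitlines()[-1])
    except (IndexError, ValueError):
        return {"success": False, "reason": "no_score", "score": None}
    return {"success": True, "reason": "ok", "score": score}
```

Returning a structured record for rejected candidates, rather than raising, lets failed runs still be stored with a success flag.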

3.3 Analyzer Module

In our setting, the primary feedback used for selection is a scalar score produced by a task-specific evaluation, but the same run also yields rich auxiliary signals—multiple metrics, feature importances, training logs, and execution traces—that are useful for diagnosis yet too verbose to feed directly into subsequent rounds. This is particularly pronounced in the complex, large-scale tasks we target, where a single experiment may generate extensive logs spanning training dynamics, benchmark breakdowns, and efficiency traces.

The Analyzer is designed to handle this asymmetry: it receives the current program together with the full experimental output—including raw logs and detailed metrics—and distills them into a compact, decision-oriented report. This full exposure allows the Analyzer to perform thorough causal analysis; the resulting report will be persisted in the database and used for retrieval in subsequent rounds, keeping context length manageable without sacrificing analytical depth.

3.4 Cognition Base

For long-horizon research tasks, exploration from scratch offers a larger hypothesis space but incurs substantial resource and time cost. We therefore introduce a cognition base that encodes human prior knowledge—task-relevant heuristics, known pitfalls, and design principles drawn from domain literature and prior runs—so that the system can be steered toward promising directions and iterate efficiently rather than rediscovering well-documented failure modes.

In each round, after sampling context nodes from the database, the pipeline uses the sampled nodes' information as queries to retrieve a small set of similar cognition entries via embedding-based semantic search; these entries are then injected into the Researcher's context to guide hypothesis generation. Our experiments show that equipping the loop with this cognition base significantly improves cold-start climb speed and iteration efficiency, without limiting long-term exploration capability.
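The retrieval step can be sketched as a nearest-neighbor lookup. The example below is illustrative only: `embed` is a toy bag-of-words stand-in for a real embedding model, and `retrieve`, its `k` parameter, and the max-over-queries scoring rule are assumptions rather than details given in the text.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    # Toy bag-of-words "embedding"; a real system would call an embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(queries: List[str], cognition: List[str], k: int = 2) -> List[str]:
    # Queries are the sampled nodes' analyses or motivations.
    if not queries:
        return []
    q_vecs = [embed(q) for q in queries]
    # Score each entry by its best similarity to any query, then keep the top k.
    scored = [(max(cosine(q, embed(c)) for q in q_vecs), c) for c in cognition]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:k]]
```

The returned entries would then be placed into the Researcher's prompt alongside the sampled nodes.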

3.5 Database

The database is the system's persistent memory: it stores the outcome of each evolution round and supplies the sampled nodes that form the Researcher's context. Whereas the cognition base provides fast-start prior knowledge, the historical nodes in the database convey task-specific information and become the dominant information source as evolution progresses, supporting sustained improvement beyond the initial climb.

Each evolution step produces a node that stores: (i) the Researcher's motivation, (ii) the generated program, (iii) structured results from the evaluation script, (iv) the analysis report, and (v) auxiliary metadata such as runtime and a success flag. To support comparison and flexible deployment, we encapsulate multiple sampling policies behind a unified interface: UCB1, random, greedy, and a MAP-Elites island algorithm.
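A node with these five parts might be represented as follows. The class name `EvolutionNode`, the field names, and the JSON serialization are assumptions for illustration; the text specifies only what each node stores, not its schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict


@dataclass
class EvolutionNode:
    motivation: str                 # (i) the Researcher's motivation
    program: str                    # (ii) the generated program
    results: Dict[str, Any]         # (iii) structured evaluation results
    analysis: str                   # (iv) the Analyzer's report
    metadata: Dict[str, Any] = field(default_factory=dict)  # (v) runtime, success flag

    def to_json(self) -> str:
        # Persist the node as a JSON string (hypothetical storage format).
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, payload: str) -> "EvolutionNode":
        return cls(**json.loads(payload))
```

Keeping the analysis report as its own field means later rounds can retrieve the compact report without reloading raw logs.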

4. Main Tasks and Results

We apply ASI-Evolve to three central components of AI development—model architecture design, training data preparation, and training algorithm design—covering key parts of the AI research pipeline from model structure to data to training. Each task shares a set of characteristics that make autonomous research particularly challenging: limited available prior knowledge specific to the task, long iteration cycles, substantial implementation complexity, and experimental feedback that is indirect, multi-dimensional, and difficult to interpret.

4.1 Model Architecture Design

Task Formulation

Model architecture is a foundational component of AI systems, determining the capacity to model complex patterns, computational efficiency, and generalization. In this task, we focus on designing efficient sequence models through linear attention mechanisms. The quadratic complexity of standard Transformer attention (O(N²)) has motivated extensive research into sub-quadratic alternatives—including DeltaNet, Gated DeltaNet, Mamba, and RWKV—which achieve O(N) complexity by decomposing attention computations or maintaining compressed memory states.
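To make the O(N²) versus O(N) contrast concrete, the sketch below computes plain, unnormalized causal linear attention in two ways: by summing over all earlier positions, and by carrying a fixed-size state matrix. It is a generic textbook illustration with an identity feature map, not DeltaNet's delta-rule update and not any architecture discovered by ASI-Evolve; the function names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def quadratic_causal(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    # o_t = sum over s <= t of (q_t . k_s) * v_s, costing O(N^2) in sequence length
    out = []
    for t in range(len(q)):
        o = [0.0] * len(v[0])
        for s in range(t + 1):
            w = sum(a * b for a, b in zip(q[t], k[s]))
            for j in range(len(o)):
                o[j] += w * v[s][j]
        out.append(o)
    return out


def linear_recurrent(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    # Same output using a compressed memory state S = sum of k_s v_s^T,
    # updated once per token, costing O(N) in sequence length.
    d_k, d_v = len(k[0]), len(v[0])
    state = [[0.0] * d_v for _ in range(d_k)]
    out = []
    for q_t, k_t, v_t in zip(q, k, v):
        for i in range(d_k):
            for j in range(d_v):
                state[i][j] += k_t[i] * v_t[j]
        out.append([sum(q_t[i] * state[i][j] for i in range(d_k)) for j in range(d_v)])
    return out
```

The state has size d_k × d_v regardless of sequence length, which is what "maintaining compressed memory states" refers to.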

Using DeltaNet as the baseline, the task requires the AI system to design novel attention layers with sub-quadratic complexity, employ chunk-wise computation patterns for efficient parallel training, and produce complete runnable implementations integrated into an existing large codebase.

Methodology

We initialize the cognition repository with approximately 150 entries extracted from 100 papers on linear attention, state space models, and efficient transformers, providing the system with domain priors from the outset. The database uses a periodically refreshed candidate pool that retains the top 50 highest-scoring nodes; each round samples its root architecture from the top 10 and draws reference context from the broader top 50.
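The pool-based sampling described above can be sketched as follows. Uniform sampling within each tier, the `n_refs` parameter, and the function name `sample_round` are assumptions; the text states only the top-50 pool, the top-10 root tier, and that references come from the broader pool.

```python
import random
from typing import List, Tuple

ScoredNode = Tuple[str, float]  # (architecture name, fitness score)


def sample_round(
    scored: List[ScoredNode],
    rng: random.Random,
    pool_size: int = 50,
    parent_top: int = 10,
    n_refs: int = 3,
) -> Tuple[ScoredNode, List[ScoredNode]]:
    # Candidate pool: the highest-scoring nodes (requires at least one node).
    pool = sorted(scored, key=lambda node: node[1], reverse=True)[:pool_size]
    # Root architecture comes from the top tier of the pool.
    parent = rng.choice(pool[:parent_top])
    # Reference context is drawn from the rest of the pool.
    others = [node for node in pool if node is not parent]
    refs = rng.sample(others, min(n_refs, len(others)))
    return parent, refs
```

Drawing the root from a narrow tier while taking references from a wider one keeps edits anchored to strong designs but still exposes the Researcher to more varied ideas.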

Three targeted engineering adaptations improve runtime efficiency and constraint satisfaction:

- Static check agent: Intercepts each generated design before training, verifying complexity bounds, chunk-wise structure, and causal mask correctness.

- Debug agent: Handles runtime implementation errors by inspecting error traces and attempting targeted fixes.

- Novelty check: Filters duplicate proposals via motivation similarity, encouraging genuine exploration.

We adopt a multi-stage evaluation strategy to balance exploration efficiency with result reliability. In the exploration phase, small models (~20M parameters) are trained for 2000 steps on 1B tokens and evaluated on 10 core benchmarks. Candidates are scored via a composite fitness that combines quantitative metrics from loss and benchmark scores with LLM-as-a-Judge qualitative scores for code complexity, efficiency, and innovativeness.

In the verification phase, promising candidates are scaled to ~340M parameters and trained on 1B tokens to verify that their gains persist under scaling. The top architectures then undergo large-scale validation at ~1.3B parameters, trained on 100B tokens, with evaluation expanded to 16 benchmarks including 6 held-out OOD test sets.

Results

Over 1773 exploration rounds, 105 architectures surpassed the DeltaNet baseline in the verification phase. We selected 5 representative architectures spanning diverse design philosophies for large-scale validation. On development benchmarks, these architectures achieve up to 57.28% average accuracy compared to DeltaNet's 55.76%; on generalization benchmarks, they reach up to 45.40% versus DeltaNet's 44.74%, confirming that gains transfer beyond the training distribution.

Our best model achieves nearly 3× the gain of the current human-designed SOTA (Mamba2's +0.34 points over DeltaNet). This demonstrates that AI-driven evolution can discover architectures significantly outperforming human expert designs even in this high-saturation regime.

Analysis of Discovered Architectures

Analysis of the top 5 architectures reveals a consistent theme: moving beyond fixed allocation schemes toward adaptive, multi-scale routing that dynamically adjusts computational budget based on input content.

- PathGateFusionNet: Introduces hierarchical routing where a first-stage gate allocates budget between local and contextual processing, and a second stage distributes the contextual budget across short-range, long-range, and delta-rule update paths.

- ContentSharpRouter: Implements content-aware routing with learnable temperature parameters that prevent premature commitment to single pathways.

- FusionGatedFIRNet: Replaces softmax routing with independent sigmoid gates, allowing simultaneous activation of local and global paths alongside per-head retention parameters for the delta-rule memory path.

- HierGateNet: Employs two-stage gating with dynamic learnable floor values ensuring critical paths—especially the delta-path for long-range reasoning—never fully collapse.

- AdaMultiPathGateNet: Achieves token-level control via a unified BalancedSparseGate combining global, per-head, and per-token logits with entropy penalties preventing mode collapse.

4.2 Pretraining Data Curation

Task Formulation

In this task, ASI-Evolve must design category-specific curation strategies that improve pretraining data quality. Strategy design is inherently difficult: the strategy space is vast and discrete, encompassing choices of which operations to apply, how to specify decision criteria, and which quality issues to prioritize, with no clear mapping from design choices to effectiveness.

For each category, experts must examine data samples to identify issues, explore this combinatorial space to formulate candidate strategies, write detailed specifications, validate results, and iteratively refine, a process requiring significant effort per category. This challenge scales with corpus heterogeneity: modern pretraining corpora comprise hundreds of categories spanning domains, content types and quality levels, each demanding independent strategy design.

Methodology

We apply the ASI-Evolve framework to the pretraining data curation task. The cognition repository is initialized by examining sampled data from each category, storing identified quality issues such as HTML artifacts, incomplete fragments, formatting inconsistencies, and domain-specific noise patterns.

In each iteration, the Researcher retrieves relevant quality issues from the cognition repository and generates candidate curation strategies. The Engineer executes these strategies on 500 sampled documents, applying the specified operations to produce cleaned versions. The Analyzer evaluates 50 randomly selected (original, cleaned) pairs, scoring each on a 1-10 scale. The Analyzer also provides diagnostic feedback on coverage (which identified issues were addressed) and executability (instruction clarity and consistency).
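One evaluation pass over a candidate strategy might look like the sketch below. The names `evaluate_strategy`, `strip_html`, and `toy_judge` are hypothetical, the judge is a trivial rule standing in for the Analyzer's LLM-based scoring, and averaging the pair scores into a single number is an assumption.

```python
import random
import re
from statistics import mean
from typing import Callable, List


def strip_html(doc: str) -> str:
    # Toy curation strategy: remove HTML tags.
    return re.sub(r"<[^>]+>", "", doc)


def toy_judge(original: str, cleaned: str) -> int:
    # Stand-in for the Analyzer's 1-10 rating of an (original, cleaned) pair.
    return 10 if "<" not in cleaned else 1


def evaluate_strategy(
    docs: List[str],
    clean: Callable[[str], str],
    judge: Callable[[str, str], int],
    rng: random.Random,
    n_docs: int = 500,
    n_pairs: int = 50,
) -> float:
    # Engineer: apply the strategy to a sample of documents.
    sampled = rng.sample(docs, min(n_docs, len(docs)))
    pairs = [(d, clean(d)) for d in sampled]
    # Analyzer: score a random subset of (original, cleaned) pairs.
    judged = rng.sample(pairs, min(n_pairs, len(pairs)))
    scores = [judge(original, cleaned) for original, cleaned in judged]
    if any(not 1 <= s <= 10 for s in scores):
        raise ValueError("judge scores must lie on the 1-10 scale")
    return mean(scores)
```

The diagnostic feedback on coverage and executability is free-form text in the described system and is not modeled here.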

Results

The system successfully designed effective strategies for all selected categories from Nemotron-CC spanning 672B tokens across academic content in mathematics, computer science, medicine, and other STEM fields. Applying the optimized strategies produces Nemotron-CC_ASI+ (504B tokens).

When 3B-parameter models are trained from scratch on 500B tokens and evaluated across 18 benchmarks, Nemotron-CC_ASI+ achieves an average score of 44.13, surpassing the raw data by 3.96 points and also outperforming established corpora including DCLM, FineWeb-Edu, and Ultra-FineWeb under identical training budgets. Gains are particularly pronounced on knowledge-intensive tasks: MMLU +18.64 points, CSQA +18.80 points, MedQA +13.48 points.

Analysis

Across all categories, the system converges on cleaning-focused approaches without any prescriptive guidance on which operations to employ, consistently combining targeted noise removal (HTML artifacts, duplicates, PII), format normalization (whitespace, punctuation), and domain-aware preservation rules.

Effective strategies exhibit consistent design patterns: concrete criteria with measurable thresholds, targeted deletion of specific elements, and explicit preservation rules that prevent over-aggressive filtering. The 2.93-point gap between optimized and suboptimal strategies further illustrates the value of iterative refinement.

4.3 Reinforcement Learning Algorithm Design

Task Formulation

In this task, we asked ASI-Evolve to design a novel Reinforcement Learning (RL) algorithm for Large Language Model (LLM) training. Using Group Relative Policy Optimization (GRPO) as the baseline, the objective was to redesign the mechanism for advantage allocation across sequences and the subsequent gradient computation. To succeed, the system was required to comprehend the mathematical foundations of RL, interpret diverse training metrics, and distinguish between stochastic training instability and genuine algorithmic improvements.

Methodology

We initialized the cognition repository with 10 high-quality papers published subsequent to GRPO, covering variance reduction techniques and KL-penalty modifications. These entries provided the system with a preliminary understanding of the current research frontier, constraining the search space toward plausible mathematical directions while avoiding theoretical dead ends.

We employed a two-stage validation protocol to balance computational cost and evaluation reliability. In the exploration phase, candidate algorithms were trained on a 4B parameter model for 150 steps and evaluated on 6 mathematics benchmarks. Promising candidates were then scaled to a 14B parameter model for 300 steps in the verification phase, with the evaluation suite expanded to Abstract Reasoning, STEM, Finance, and Coding domains to test generalization.

Results

Over the course of 300 evolutionary rounds, the system trained and evaluated a diverse array of policy gradient modifications, yielding 10 algorithms that outperformed the GRPO baseline in the exploration phase. Upon scaling to the 14B parameter verification phase, 3 algorithms demonstrated statistically significant improvements across all tested domains.

On mathematical benchmarks, the best evolved variants improve over GRPO by +12.5 points on AMC32 (67.5 → 80.0), +11.67 points on AIME24 (20.00 → 31.67), and +5.04 points on OlympiadBench (45.92 → 50.96), suggesting that the system can effectively optimize subtle mathematical trade-offs in loss function design.

Analysis of Discovered Algorithms

We highlight two representative high-performing algorithms that exhibit distinct theoretical innovations:

Algorithm A (Pairwise Asymmetric Optimization): Introduces a comparative advantage estimation: instead of using a group mean, the advantage for a response A is calculated by averaging the tanh-normalized pairwise reward differences against all other group samples. It further employs an asymmetric clipping mechanism that dynamically adjusts the PPO clipping window based on the sign of the advantage, and implements High-Impact Gradient Dropout, stochastically masking gradients for the most influential tokens to prevent overfitting to specific keywords.
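One plausible reading of Algorithm A's advantage estimate and sign-dependent clipping is sketched below. The temperature `tau`, the clip widths `eps_pos` and `eps_neg`, and both function names are hypothetical; the text does not give the exact normalization or clipping rule, and gradient dropout is omitted.

```python
import math
from typing import List


def pairwise_tanh_advantages(rewards: List[float], tau: float = 1.0) -> List[float]:
    # Advantage of response i: mean over all other group samples j of
    # tanh((r_i - r_j) / tau), instead of subtracting a group mean.
    n = len(rewards)
    if n < 2:
        raise ValueError("need at least two responses in a group")
    return [
        sum(math.tanh((r_i - r_j) / tau) for j, r_j in enumerate(rewards) if j != i) / (n - 1)
        for i, r_i in enumerate(rewards)
    ]


def asymmetric_clipped_surrogate(
    ratio: float,
    advantage: float,
    eps_pos: float = 0.3,  # assumed window for positive advantages
    eps_neg: float = 0.1,  # assumed window for negative advantages
) -> float:
    # PPO-style clipped objective whose clip window depends on the advantage sign.
    eps = eps_pos if advantage >= 0 else eps_neg
    clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
    return min(ratio * advantage, clipped * advantage)
```

Because tanh is odd, the pairwise advantages within a group sum to zero, and each one is bounded in (-1, 1) regardless of reward scale.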

Algorithm B (Budget-Constrained Dynamic Radius): Adopts percentile-based normalization for advantage calculation. Its core innovation is the Global Update Budget: the algorithm dynamically assigns each token a trusted update radius inversely proportional to the magnitude of its advantage, and strictly enforces an exponential bound, mathematically guaranteeing that the total policy update magnitude remains within a pre-defined budget and effectively stabilizing training on noisy data.

These two algorithms illustrate that ASI-Evolve can perform rigorous mathematical derivation and discover principled solutions to fundamental stability and variance challenges in RL training, paralleling innovations seen in human-designed algorithmic advances.

5. Empirical Analysis

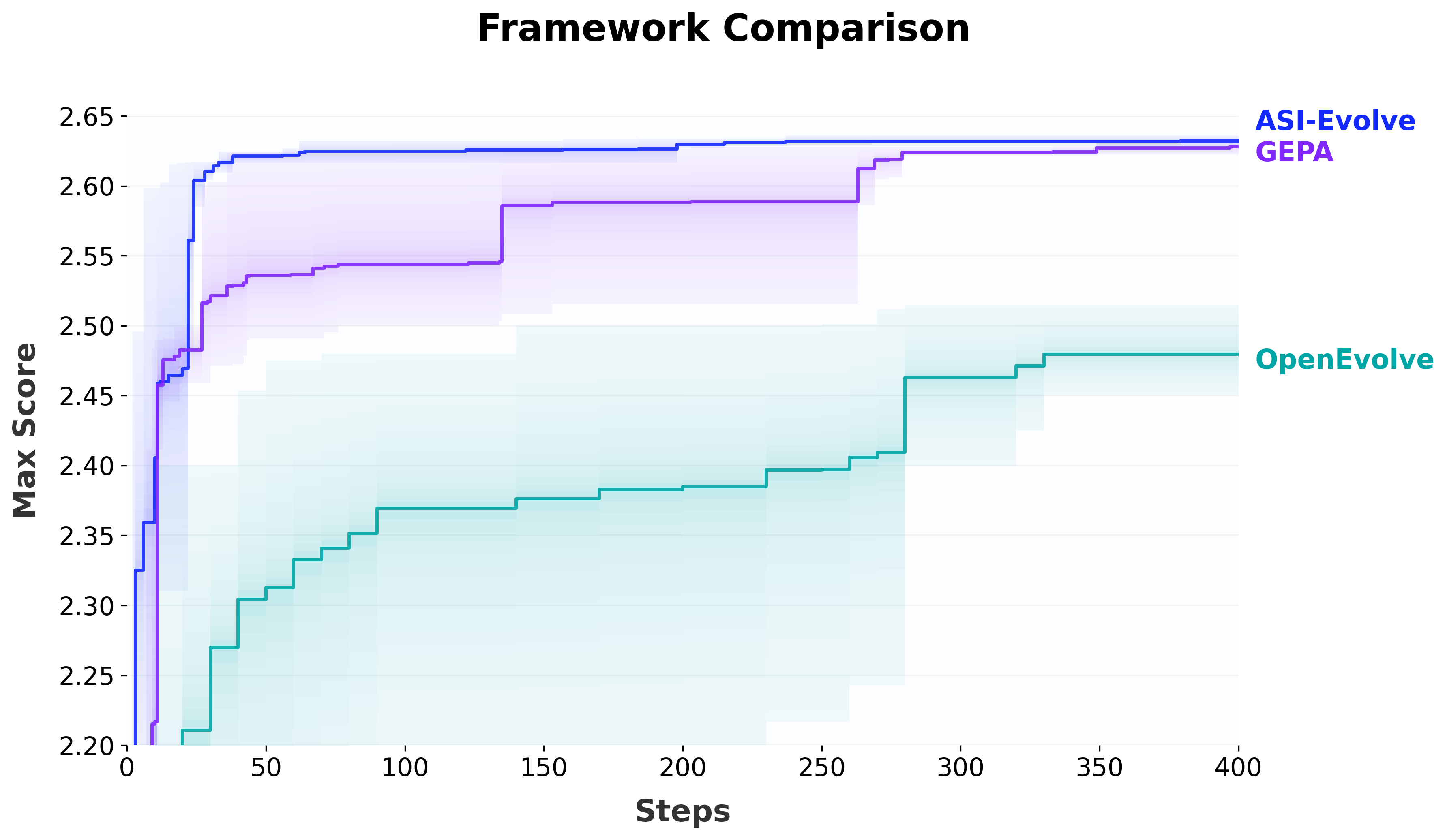

5.1 Benchmarking on Circle Packing

We use the circle packing task from AlphaEvolve as a controlled evaluation platform. The problem requires placing 26 circles within a 1×1 square to maximize the sum of their radii. This is a classic combinatorial optimization problem with low verification cost, yet it still demands non-trivial algorithm design and iterative refinement.
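The low verification cost is easy to see in code: a candidate packing is valid if every circle lies inside the unit square and no two circles overlap, and its score is the sum of radii. The checker below is a sketch; the function name `packing_score` and the tolerance `tol` are assumptions, not the benchmark's official evaluator.

```python
import math
from typing import List, Optional, Tuple

Circle = Tuple[float, float, float]  # (center x, center y, radius)


def packing_score(circles: List[Circle], tol: float = 1e-9) -> Optional[float]:
    # Every circle must have positive radius and fit inside the 1x1 square.
    for x, y, r in circles:
        if r <= 0:
            return None
        if x - r < -tol or x + r > 1 + tol or y - r < -tol or y + r > 1 + tol:
            return None
    # No two circles may overlap (touching is allowed).
    for i in range(len(circles)):
        xi, yi, ri = circles[i]
        for j in range(i + 1, len(circles)):
            xj, yj, rj = circles[j]
            if math.hypot(xi - xj, yi - yj) < ri + rj - tol:
                return None
    # Objective: the sum of radii.
    return sum(r for _, _, r in circles)
```

For the benchmark itself, the list would contain 26 circles; the checker works for any count.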

Key Results

ASI-Evolve reaches 2.63597 in as few as 17 steps, the fastest among all compared systems, and achieves a best score of 2.635983, comparable to the top results reported by other frameworks.

5.2 Comparison Experiments

Framework Comparison

Using Qwen3-32B as the base model, we compare ASI-Evolve against two representative evolutionary frameworks—OpenEvolve and GEPA—under an aligned prompt setup:

- OpenEvolve: Continues to evolve throughout the run, but exhibits high variance across independent runs and delivers only limited overall improvement, with scores plateauing well below the SOTA level.

- GEPA: Achieves competitive scores, converging to a range around 2.630. Its performance is substantially better than OpenEvolve, reflecting the benefit of structured evolutionary design.

- ASI-Evolve: Exits the cold-start phase with a noticeably higher score than both baselines, continues to improve steadily throughout the run, and is the only framework to reliably reach SOTA-level performance.

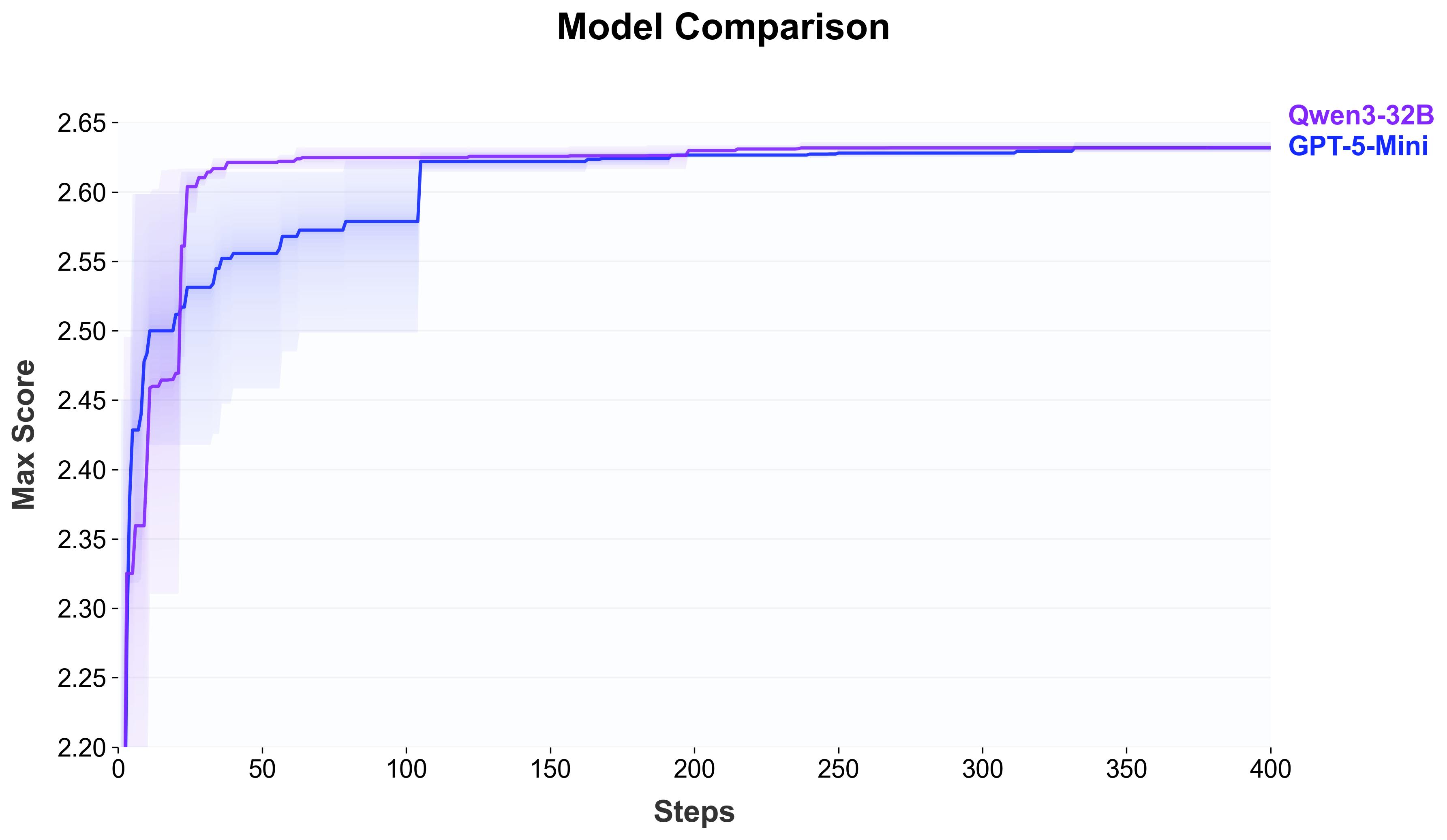

Model Comparison

We further run ASI-Evolve with GPT-5-mini and with Qwen3-32B as the base model. The two runs converge to a similar range and exhibit highly consistent mid-to-late-stage improvement trends, indicating that the framework's evolution capability is not tied to a particular model family.

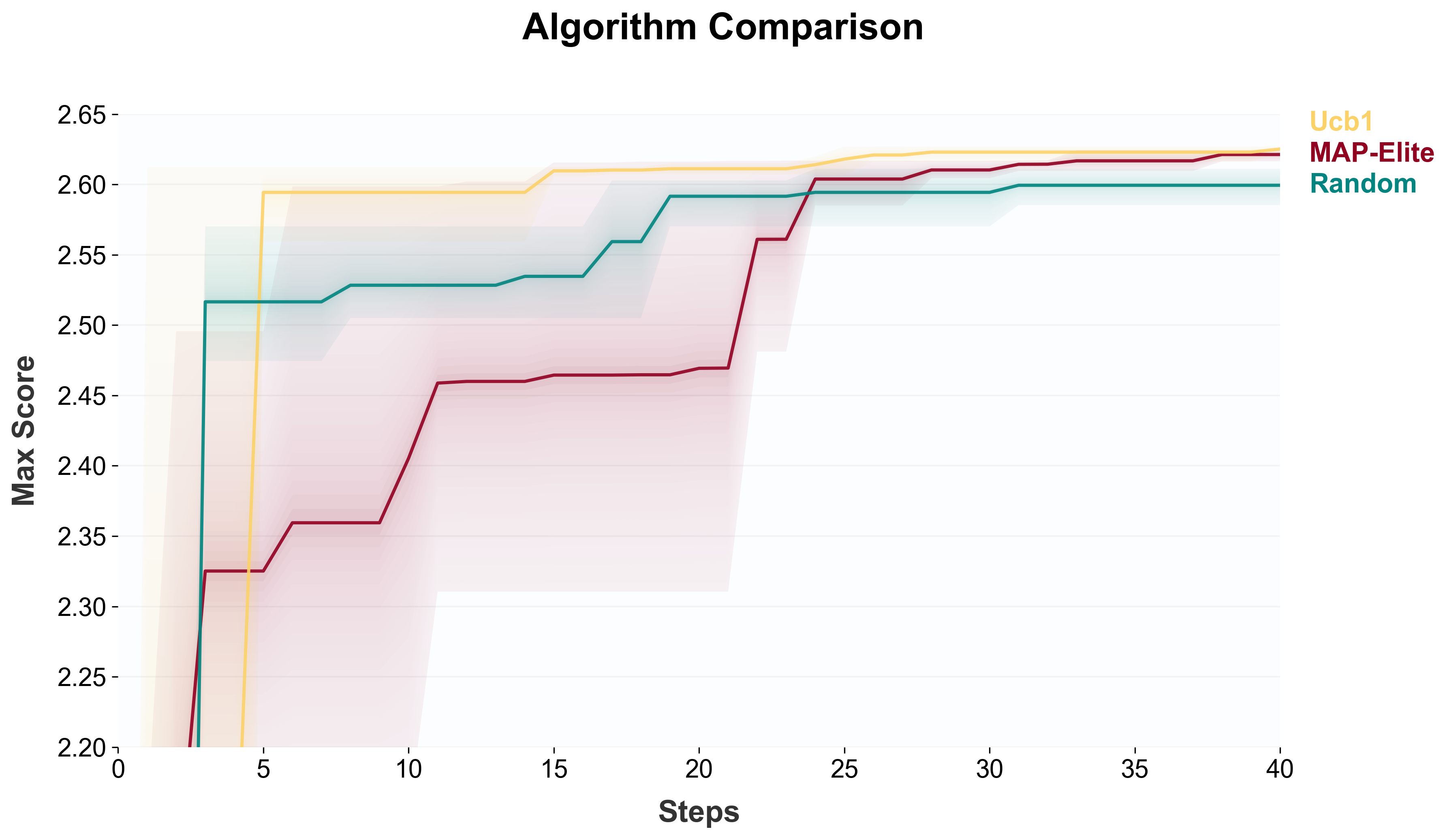

Algorithm Comparison

The database sampling algorithm determines how parent nodes are selected each round, directly shaping the balance between exploration and exploitation:

- MAP-Elites: Maintains a quality-diversity archive partitioned by behavioral features, actively preserving diverse niches to prevent premature convergence.

- UCB1: Treats each node as a bandit arm and selects based on an upper confidence bound that combines estimated value with an exploration bonus.

- Random: Selects parent nodes uniformly at random from the database, without any preference for score or diversity.

UCB1 reaches high-score regions faster than MAP-Elites and exhibits lower variance across runs. In the presence of cognition, the system can relax its dependence on diversity-preserving samplers and thereby achieve faster convergence. Notably, combining UCB1 with GPT-5-mini, the system discovered a circle-packing solution scoring 2.63597—matching the SOTA level—in just 17 steps.
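The UCB1 rule can be sketched as below, using the standard form in which the exploration bonus is sqrt(2 ln N / n_i). How ASI-Evolve maps nodes to arm statistics is not specified in the text, so treating `values` as per-node mean scores (ideally normalized to [0, 1]) and `visits` as per-node sampling counts is an assumption, as is the function name.

```python
import math
from typing import List


def ucb1_select(values: List[float], visits: List[int], c: float = math.sqrt(2)) -> int:
    # Try every arm once before applying the confidence bound.
    for i, n in enumerate(visits):
        if n == 0:
            return i
    total = sum(visits)
    # Estimated value plus an exploration bonus that shrinks with more visits.
    return max(
        range(len(values)),
        key=lambda i: values[i] + c * math.sqrt(math.log(total) / visits[i]),
    )
```

A node that has rarely been chosen as a parent receives a large bonus, so it is revisited even if its current score is somewhat lower.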

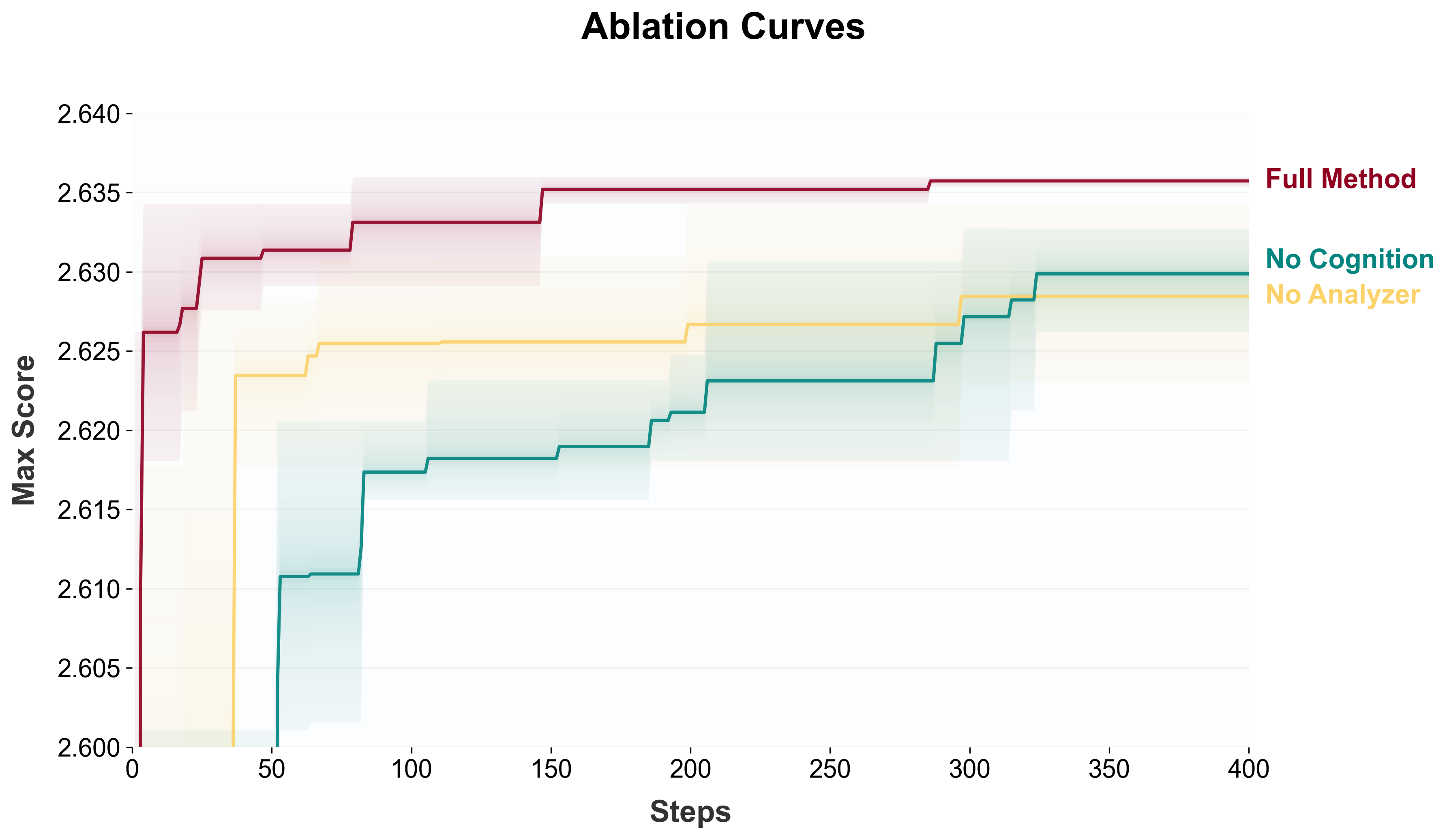

5.3 Ablation Study

We design controlled experiments to systematically evaluate key components of the ASI-Evolve framework:

- Full Method: ASI-Evolve with Analyzer, Cognition repository, and the complete four-stage loop.

- No Analyzer: Remove the Analyzer module. Raw evaluation scores and execution logs are stored directly in the Database.

- No Cognition: Remove the Cognition repository. The Researcher receives no literature-derived prior knowledge.

Impact of Removing Analyzer

With the Analyzer removed, the variant still begins from a relatively high score in the early phase, attributable to the Cognition repository providing domain priors. However, it subsequently enters a prolonged plateau where further iterations yield only marginal gains, and its ability to keep pushing toward higher ceilings becomes markedly weaker.

Impact of Removing Cognition

The No Cognition variant exhibits a more pronounced cold-start cost: early improvements are slower and less stable. After sufficient effective experience is accumulated, the curve shows a noticeable jump and then gradually enters a higher-scoring, productive exploration regime. This matches the intended role of Cognition: it does not change the framework's core learning mechanism, but provides better priors to reduce unproductive exploration.

5.4 Real-World Applicability: Drug-Target Interaction Discovery

The experiments above confirm that ASI-Evolve delivers strong results on AI-for-AI tasks. Yet a legitimate concern persists: even if AI can effectively optimize AI systems, are the resulting solutions genuinely useful when deployed in the real world? To address this directly, we present results on drug-target interaction (DTI) prediction, where the architecture evolved by ASI-Evolve is applied to a biomedical task.

Task Formulation

We apply ASI-Evolve to Drug-Target Interaction (DTI) prediction, a central problem in AI-driven drug discovery. Effective DTI models must simultaneously capture modality-specific representations of drug molecules and protein targets, as well as their complex interaction patterns. We use DrugBAN as the seed architecture and aim to discover improved variants through automated architectural evolution.

Results

Our discovered architecture achieves consistent improvements over the DrugBAN baseline across most evaluation settings. On the BindingDB development set, we observe a substantial improvement of +1.91 AUROC points and +2.95 F1 points. Importantly, these improvements transfer to the test splits.

On cold-start scenarios where the model must generalize to completely unseen drugs or proteins, our architecture shows substantial generalization improvements: +6.94 AUROC points for unseen drugs, +3.56 points for unseen proteins, and +4.36 points in the doubly-cold-start setting. These improvements significantly exceed the in-distribution gains, suggesting that the evolved architecture has learned more robust and transferable representations of molecular interactions.

Analysis of Discovered Architecture

The best discovered architecture introduces three key innovations over DrugBAN:

- Sinkhorn Attention: Replacing standard bilinear attention with optimal-transport-based Sinkhorn iterations enforces doubly-stochastic constraints, ensuring balanced attention allocation between drug and protein features and preventing attention collapse.

- Domain-Specific Marginalization: Specialized marginalization over molecular substructures and protein domains aggregates interaction patterns across distinct semantic spaces, enabling more compositional modeling of binding mechanisms.

- Top-k Sparse Gating: Learnable top-k selection dynamically focuses on the most relevant interaction patterns, reducing noise from irrelevant molecular features.

These choices align with domain knowledge—optimal transport has theoretical connections to binding affinity, while compositional reasoning over substructures reflects established principles in medicinal chemistry.
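The doubly-stochastic constraint behind Sinkhorn attention can be illustrated with the classic Sinkhorn normalization: exponentiate a score matrix, then alternately rescale rows and columns. This is a generic sketch on a square matrix, not the evolved architecture's layer; the function name and iteration count are assumptions.

```python
import math
from typing import List

Matrix = List[List[float]]


def sinkhorn(scores: Matrix, n_iters: int = 20) -> Matrix:
    # Subtract the global maximum before exponentiating for numerical stability.
    m = max(max(row) for row in scores)
    p = [[math.exp(s - m) for s in row] for row in scores]
    n_cols = len(p[0])
    for _ in range(n_iters):
        # Row step: each row sums to 1.
        p = [[value / sum(row) for value in row] for row in p]
        # Column step: each column sums to 1.
        col_sums = [sum(row[j] for row in p) for j in range(n_cols)]
        p = [[value / col_sums[j] for j, value in enumerate(row)] for row in p]
    return p
```

Unlike a row-wise softmax, the result also limits how much total weight any single column can receive, which is the sense in which attention is kept balanced between the two sides.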

6. Conclusion

In this paper, we presented ASI-Evolve, an agentic evolution framework that enables AI to carry out end-to-end autonomous scientific research. Through controlled comparisons against existing evolutionary baselines and systematic ablation studies, we verified that the framework design is effective: equipped with a structured cognition base and a dedicated analyzer, the system achieves rapid cold-start and sustains continuous improvement, reliably reaching SOTA-level results.

We further explored whether AI can accelerate its own research pipeline across each stage of the scientific process. The closed learn–design–experiment–analyze loop enables efficient self-improvement, and we demonstrate breakthroughs across three central components of AI development—model architecture, training data, and training algorithms—each posing substantial challenges in terms of implementation complexity, iteration cost, and indirect feedback.

Beyond the core AI pipeline, our drug-target interaction experiment demonstrates that model designs discovered through AI-driven research can be effectively deployed in real-world tasks, showing that AI-optimized solutions carry genuine scientific value.

Looking ahead, the scope of AI self-acceleration extends beyond individual models to the full AI development stack: architecture, data, and algorithms, as well as infrastructure, which remains to be explored. As agentic systems take on more of the implementation and iteration work, human scientists can shift from being the executors of solutions to the definers of problems, concentrating their expertise on the questions that matter most and leaving the expansive search through hypothesis spaces to AI. We expect this paradigm to drive not only the self-improvement of individual models, but the self-evolution of the entire AI field.